Big O Notation | Time Complexity and Space Complexity

Time Complexity and Space Complexity

Time Complexity

Time complexity is a concept that predicts the execution time of source code logic and shows how efficient the code is. Execution time increases in proportion to operations. It is related to CPU usage.

Space Complexity

Space complexity is a concept that describes how efficiently code uses memory space. It often appears with data structures that reserve space in advance, such as static arrays or hash tables. It is related to RAM usage.

Source code logic must work first, but whether the code is efficient is determined not only by behavior but also by calculating time and space complexity.

What Is Big O Notation?

Big O is a representative way to mathematically express time and space complexity. However, it does not show the actual runtime of code. It is used to logically predict algorithm performance according to the growth rate of input data. Big O notation has the following two rules.

Keep only the highest-order term.

O(n² + 2n) -> O(n²)

Discard coefficients and constants boldly.

O(4n + 5) -> O(n)

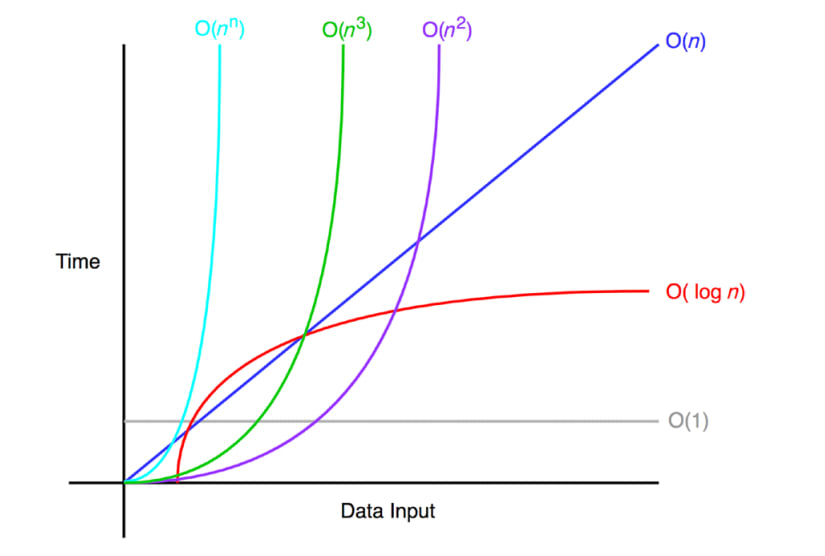

Classifying Time Complexity with Big O

O(1) (Constant)

Represents an algorithm that always takes a constant time regardless of input size. Even if data increases, performance is barely affected.

O(log₂ n) (Logarithmic)

An algorithm whose processing time grows logarithmically as input size increases. For example, if the data becomes 10 times larger, processing time doubles. Binary search is representative, and some beneficial recursive cases also apply.

O(n) (Linear)

An algorithm whose processing time increases in proportion to input size. For example, if the data becomes 10 times larger, processing time also becomes 10 times larger. Linear search is representative.

O(n log₂ n) (Linear-Logarithmic)

An algorithm whose processing time increases by a logarithmic factor as data grows. For example, if the data becomes 10 times larger, processing time becomes about 20 times larger. This is the average time complexity of sorting algorithms such as Merge sort and Quick sort.

O(n²) (Quadratic)

An algorithm whose processing time increases rapidly as data grows. For example, if the data becomes 10 times larger, processing time can become up to 100 times larger. Nested loops, or an n² matrix, are representative. However, when m is smaller than n, it is better to write O(nm).

O(2ⁿ) (Exponential)

An algorithm whose processing time increases exponentially as the amount of data grows. The Fibonacci sequence is representative, and recursive algorithms that work inefficiently also fall into this category.

How to Calculate Time Complexity

Write down the number of executions whenever an instruction ends.

Basic Calculation

public static void basic() {

int[] a = {7, 3, 6, 3, 3, 5, 6, 9}; // 1

for (int i = 0; i < a.length - 1; i++) { // n [i > 0 to n-1 : n times called]

for (int j = i + 1; j < a.length; j++) { // (n-1) * n [j > 1 to n : n times called]

if (a[i] == a[j]) { // (n-1) * (n-1) [if statement eventually runs n-1 times]

a[j] = 0;

}

}

}

for (int i : a) {

System.out.println(i);

}

}

Adding all instruction counts gives 2n²-2n+2. Omitting constants and considering only the highest-order term, it is written as O(n²).

Calculating Recursive Function Time Complexity

public static int factorial(int n) {

System.out.println("n:" + n);

if (n == 1) {

return 1;

}

return n * factorial(n - 1);

}

The recursive call is made n times as shown below, so it is written as O(n).

factorial(n) -> factorial(n -1) .... ->factorial(2) -> factorial(1)