HTTP 2.0

HTTP/2 (also called HTTP 2.0) is version 2 of the Hypertext Transfer Protocol. It succeeds HTTP/1.1, the previous standard, and was published by the IETF in 2015 as RFC 7540.

IETF (Internet Engineering Task Force) is the international organization that develops Internet standards. Its working groups discuss the operation, management, and development of the Internet and analyze protocol and architectural issues.

HTTP/2 runs over a TCP connection between the server and the client, which the client opens. HTTP/2 requests and responses are carried in one or more frames, each with an explicit length. By default a frame payload can be at most 16,384 bytes, and a peer can raise this limit (up to 16,777,215 bytes) with the SETTINGS_MAX_FRAME_SIZE setting. Frames are sent on streams, and each stream carries one request and its response. Because multiple streams can be open simultaneously on one connection, multiple requests and responses can be processed at the same time. HTTP/2 also provides flow control and prioritization for streams. If the server expects the client to need a resource, it can send that resource proactively, without an explicit request.
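The frame-size rule can be sketched in Python. The example below splits a message body into DATA frames (type 0x0) whose payloads never exceed the default limit of 16,384 bytes, and sets the END_STREAM flag (0x1) on the last one. The function name and the tuple representation of a frame are hypothetical, and flow control and padding are ignored; it is an illustration, not a complete HTTP/2 implementation.

```python
# Default value of SETTINGS_MAX_FRAME_SIZE; peers may raise it up to 2**24 - 1.
DEFAULT_MAX_FRAME_SIZE = 16_384

DATA = 0x0        # DATA frame type code
END_STREAM = 0x1  # flag marking the last frame of the message body


def split_into_data_frames(body: bytes, stream_id: int,
                           max_frame_size: int = DEFAULT_MAX_FRAME_SIZE):
    """Hypothetical helper: return (type, flags, stream_id, payload) tuples."""
    if not 16_384 <= max_frame_size <= 16_777_215:
        raise ValueError("max_frame_size must be between 2**14 and 2**24 - 1")
    # Cut the body into chunks no larger than the negotiated maximum.
    chunks = [body[i:i + max_frame_size]
              for i in range(0, len(body), max_frame_size)] or [b""]
    frames = []
    for index, chunk in enumerate(chunks):
        flags = END_STREAM if index == len(chunks) - 1 else 0
        frames.append((DATA, flags, stream_id, chunk))
    return frames


frames = split_into_data_frames(b"x" * 40_000, stream_id=1)
print([len(payload) for _, _, _, payload in frames])  # [16384, 16384, 7232]
```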

SPDY

SPDY is a non-standard network protocol developed by Google. As the web kept changing, with more resources per page, content spread across many domains, more dynamic web services, and a growing emphasis on security, SPDY was designed to speed up HTTP by reducing Internet latency through header compression and multiplexing. It was Google’s own protocol, built into early versions of Chrome to make pages load faster.

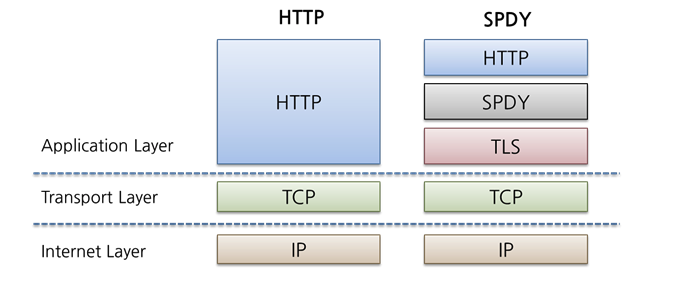

HTTP/2 is based on SPDY. It introduces a new binary framing layer between the HTTP semantics and the underlying TCP connection, aiming to use the TCP connections that HTTP depends on more efficiently.

SPDY delivered significant performance and efficiency gains over HTTP/1.1 and served as the reference specification for the HTTP/2 draft.

SPDY’s characteristics include:

- Always operates on TLS
  - Works only for websites served over HTTPS.

- HTTP header compression
  - The more requests there are, the higher the compression ratio, which matters most in low-bandwidth mobile environments.

- Binary protocol rather than text
  - Parsing is faster and less error-prone.

- Multiplexing
  - Processes multiple independent streams simultaneously over one connection.

- Full-duplex interleaving and prioritization
  - Lets frames from other streams interleave with an ongoing stream, according to priority.

- Server Push
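SPDY compressed headers with zlib, keeping one compression context for the whole connection (the real protocol also preloaded a predefined dictionary, omitted here). A minimal sketch with Python's standard zlib module and made-up headers illustrates why the compression ratio improves as requests accumulate: the second header block repeats most of the first, so it should compress to far fewer bytes.

```python
import zlib

# Made-up headers for two requests on one connection; only the path differs.
# Plain text here is a simplified stand-in for SPDY's binary name/value block.
request_1 = (b"method: GET\r\npath: /index.html\r\nhost: example.com\r\n"
             b"user-agent: ExampleBrowser/1.0\r\naccept: text/html,*/*\r\n")
request_2 = request_1.replace(b"/index.html", b"/style.css")


def compressed_sizes(header_blocks):
    """Compress each block with one shared zlib stream, as SPDY did per connection."""
    compressor = zlib.compressobj()
    sizes = []
    for block in header_blocks:
        # Z_SYNC_FLUSH emits everything so far but keeps the compression history,
        # so later blocks can refer back to bytes seen in earlier ones.
        data = compressor.compress(block) + compressor.flush(zlib.Z_SYNC_FLUSH)
        sizes.append(len(data))
    return sizes


print("uncompressed:", len(request_1), len(request_2))
print("compressed:  ", compressed_sizes([request_1, request_2]))
```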

In short, SPDY changed how HTTP data is formatted and how connections are managed so that TCP connections are used more efficiently, and most of these characteristics carried over into HTTP/2.

Main Characteristics

These features are aimed primarily at improving performance.

Packet Encapsulation

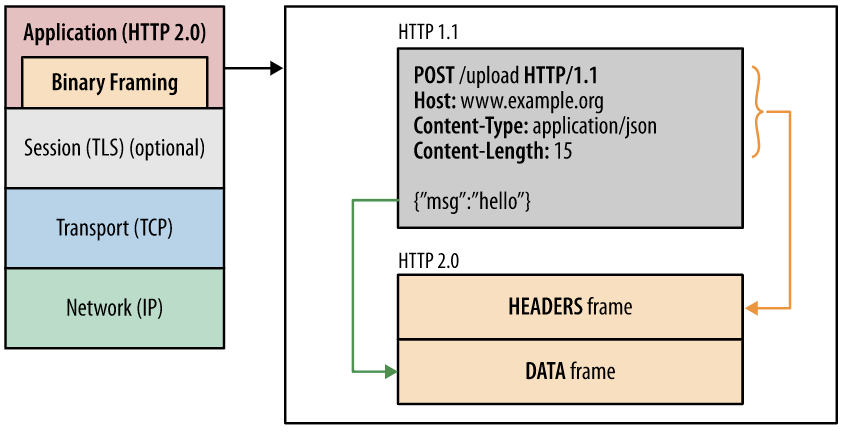

Because HTTP/2 encapsulates communication in smaller units, it introduces three concepts: frame, message, and stream.

- Frame
  - The smallest unit of communication in HTTP/2. Every frame begins with a frame header.
  - At a minimum, the frame header identifies the stream to which the frame belongs.
  - There are several frame types, such as HEADERS frames and DATA frames.

- Message
  - The complete sequence of frames that maps to a logical request or response message.

- Stream
  - A bidirectional flow of bytes within an established connection, which can carry one or more messages.
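Every frame starts with a fixed 9-byte header: a 24-bit payload length, an 8-bit type, 8 bits of flags, and a reserved bit followed by a 31-bit stream identifier. A minimal Python sketch of encoding and decoding it with the standard struct module follows; the helper names are hypothetical, and only the HEADERS type code (0x1) and the END_HEADERS flag (0x4) are used in the example.

```python
import struct

FRAME_HEADER_LEN = 9  # every HTTP/2 frame starts with a fixed 9-byte header
HEADERS = 0x1         # frame type code for HEADERS (DATA is 0x0)


def pack_frame_header(length: int, frame_type: int, flags: int, stream_id: int) -> bytes:
    """Encode length (24 bits), type, flags, and a 31-bit stream identifier."""
    if length >= 1 << 24 or stream_id >= 1 << 31:
        raise ValueError("length or stream identifier out of range")
    # '>' = network byte order; B = 1 byte, H = 2 bytes, I = 4 bytes (9 in total).
    return struct.pack(">BHBBI", length >> 16, length & 0xFFFF, frame_type, flags, stream_id)


def unpack_frame_header(header: bytes):
    """Decode a 9-byte frame header into (length, type, flags, stream_id)."""
    high, low, frame_type, flags, stream_id = struct.unpack(">BHBBI", header[:FRAME_HEADER_LEN])
    # The top bit of the stream field is reserved and is ignored on receipt.
    return (high << 16) | low, frame_type, flags, stream_id & 0x7FFF_FFFF


print(pack_frame_header(13, HEADERS, 0x4, 1).hex())  # 00000d010400000001
```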

Each HTTP/2 connection runs over TCP and can carry any number of bidirectional streams; messages are exchanged in both directions as frames, each with its own frame header.

Frames are binary-encoded, and multiplexing and other performance optimizations are built on top of this framing.

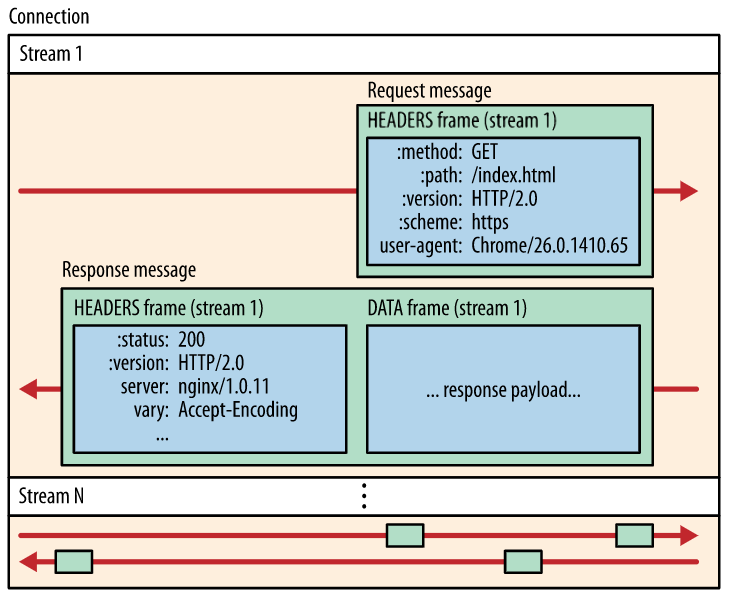

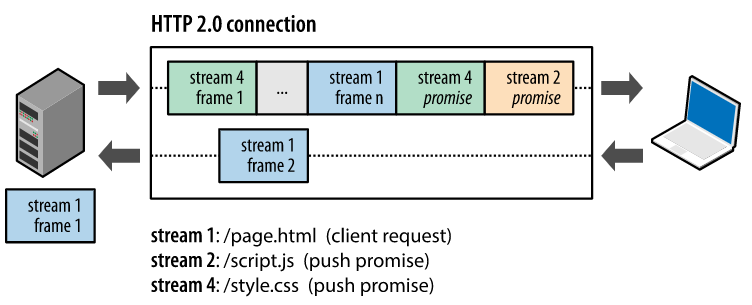

Request Multiplexing

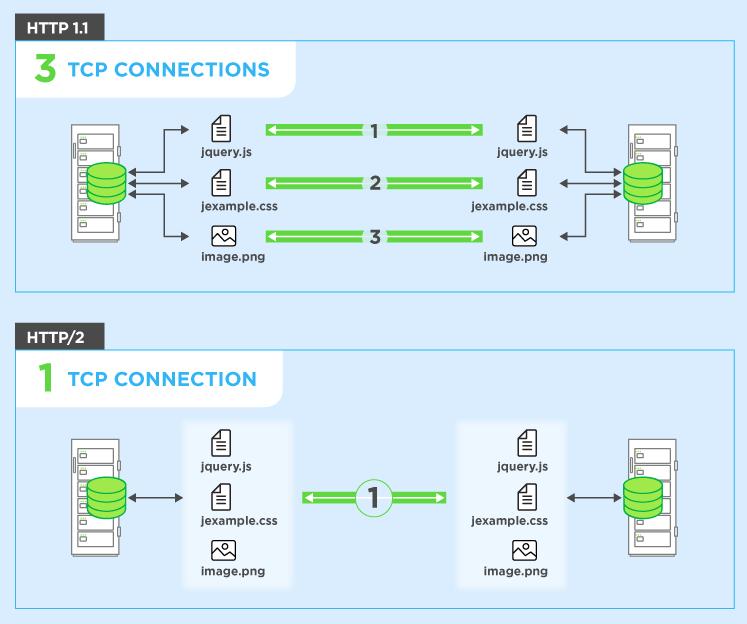

HTTP/2 can send and receive multiple data requests in parallel through one TCP connection.

A stream is an independent, bidirectional sequence of frames exchanged between client and server within an HTTP/2 connection. Each HTTP request and its response use one stream: the client opens a new stream and sends the request on it, and once the server has finished sending the response on that same stream, the stream is closed.

In HTTP/1.x, once a request was sent on a TCP connection, in practice no other request could use that connection until the response arrived (HTTP/1.1 pipelining allowed requests to be sent ahead, but responses still had to come back in order, and browsers rarely enabled it). In HTTP/2, many streams can be open at once on a single connection, so multiple requests can be in flight at the same time.
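The receiving side of multiplexing can be sketched as follows: frames from several streams arrive interleaved on one connection, and the receiver sorts them into per-stream buffers by stream identifier. This simplified Python illustration assumes frames have already been parsed into (stream_id, flags, payload) tuples, ignores HEADERS frames, and uses made-up payloads. Stream 5 completes first without waiting for streams 1 and 3.

```python
from collections import defaultdict

END_STREAM = 0x1

# Frames as they might arrive on one connection: (stream_id, flags, payload).
# Responses for streams 1, 3 and 5 are interleaved rather than sent one after another.
arrivals = [
    (1, 0, b"<html>"),
    (3, 0, b"body {"),
    (5, END_STREAM, b"\x89PNG"),
    (1, END_STREAM, b"</html>"),
    (3, END_STREAM, b"}"),
]


def demultiplex(frames):
    """Reassemble each stream's message from interleaved frames, in completion order."""
    buffers = defaultdict(bytearray)
    completed = []
    for stream_id, flags, payload in frames:
        buffers[stream_id] += payload
        if flags & END_STREAM:
            completed.append((stream_id, bytes(buffers.pop(stream_id))))
    return completed


for stream_id, body in demultiplex(arrivals):
    print(stream_id, body)
```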

This removes extra round trips (RTTs), makes websites load faster without additional optimization work, and makes domain sharding (spreading resources across several hostnames to get more parallel connections) unnecessary.

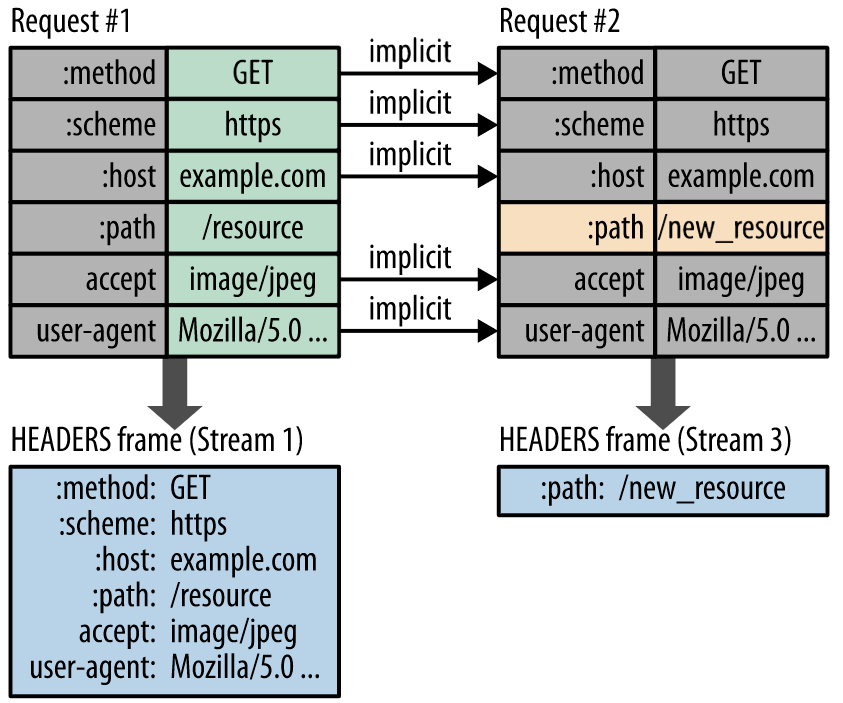

Header Compression

In HTTP/1.1 and earlier, HTTP headers were sent uncompressed. Older web pages made relatively few requests, but modern pages often make dozens of requests or more, many with nearly identical headers, so header size affects both round-trip latency and bandwidth.

HTTP/2 compresses HTTP message headers and avoids retransmitting duplicate fields.

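- HTTP headers used to be sent as plain text. HTTP/2 compresses them with HPACK, which replaces repeated fields with references to a static table and to a dynamic table of previously sent fields, and encodes string literals with Huffman coding.

- Headers are encoded into a header block, which can be split into “header block fragments” carried in a HEADERS frame followed by CONTINUATION frames. The receiver rejoins the fragments, decompresses them, and restores the original set of headers.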
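Splitting a header block into fragments and rejoining them can be sketched like this. The HEADERS (0x1) and CONTINUATION (0x9) type codes and the END_HEADERS flag (0x4) come from the HTTP/2 specification; the function names and the max_fragment parameter are hypothetical, the input bytes merely stand in for HPACK output, and the HPACK decoding step itself is not shown.

```python
HEADERS = 0x1
CONTINUATION = 0x9
END_HEADERS = 0x4  # set on the frame carrying the last fragment


def fragment_header_block(block: bytes, max_fragment: int):
    """Split an encoded header block into one HEADERS frame plus CONTINUATION frames."""
    pieces = [block[i:i + max_fragment] for i in range(0, len(block), max_fragment)] or [b""]
    frames = []
    for index, piece in enumerate(pieces):
        frame_type = HEADERS if index == 0 else CONTINUATION
        flags = END_HEADERS if index == len(pieces) - 1 else 0
        frames.append((frame_type, flags, piece))
    return frames


def reassemble_header_block(frames):
    """Concatenate fragments until END_HEADERS; the result would go to an HPACK decoder."""
    block = bytearray()
    for index, (frame_type, flags, piece) in enumerate(frames):
        expected = HEADERS if index == 0 else CONTINUATION
        if frame_type != expected:
            raise ValueError("unexpected frame type in header block")
        block += piece
        if flags & END_HEADERS:
            return bytes(block)
    raise ValueError("header block ended without END_HEADERS")


frames = fragment_header_block(bytes(range(10)), max_fragment=4)
print([(hex(t), f, len(p)) for t, f, p in frames])  # [('0x1', 0, 4), ('0x9', 0, 4), ('0x9', 4, 2)]
```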

Huffman coding is an algorithm that assigns variable-length codes according to how often each character appears: frequent characters get shorter codes and rare characters get longer ones.
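The idea behind Huffman coding can be shown with a small Python sketch that builds a code from character counts using a min-heap. HPACK does not build a code per message like this; it uses a fixed Huffman table defined in its specification (RFC 7541), so this example only demonstrates the general algorithm on a made-up header string.

```python
import heapq
from collections import Counter


def huffman_code(text: str) -> dict:
    """Build a prefix code in which frequent characters get shorter bit strings."""
    counts = Counter(text)
    if len(counts) == 1:  # a single distinct symbol still needs a 1-bit code
        return {next(iter(counts)): "0"}
    # Heap entries: (frequency, tie_breaker, {symbol: code_so_far}).
    heap = [(freq, i, {sym: ""}) for i, (sym, freq) in enumerate(counts.items())]
    heapq.heapify(heap)
    tie = len(heap)
    while len(heap) > 1:
        f1, _, left = heapq.heappop(heap)
        f2, _, right = heapq.heappop(heap)
        # Merge the two rarest subtrees, prefixing 0 on one side and 1 on the other.
        merged = {s: "0" + c for s, c in left.items()}
        merged.update({s: "1" + c for s, c in right.items()})
        heapq.heappush(heap, (f1 + f2, tie, merged))
        tie += 1
    return heap[0][2]


sample = "accept-encoding: gzip, deflate"
code = huffman_code(sample)
encoded_bits = sum(len(code[ch]) for ch in sample)
print(encoded_bits, "bits vs", 8 * len(sample), "bits as plain 8-bit text")
```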

Binary Protocol

HTTP/2 substantially changed how HTTP works by switching from a text protocol to a binary protocol. HTTP/1.x completed request-response cycles by parsing text commands, whereas HTTP/2 does the same work with binary-encoded frames. Because every frame has a fixed-size header and an explicit length, framing is simpler and implementations are easier to get right.
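The difference between text and binary framing can be sketched in Python: a text parser has to scan for CRLF delimiters, while a binary parser reads the 9-byte frame header and then exactly as many payload bytes as the length field says. The function names are hypothetical, and both parsers are deliberately minimal (no validation of frame types, flags, or header syntax).

```python
import struct


def parse_http1_headers(raw: bytes) -> list:
    """Text framing: scan for CRLF delimiters up to the blank line ending the headers."""
    head, _, _ = raw.partition(b"\r\n\r\n")
    return head.split(b"\r\n")


def parse_http2_frames(raw: bytes) -> list:
    """Binary framing: read a fixed 9-byte header, then exactly `length` payload bytes."""
    frames, offset = [], 0
    while offset + 9 <= len(raw):
        high, low, frame_type, flags, stream_id = struct.unpack_from(">BHBBI", raw, offset)
        length = (high << 16) | low
        payload = raw[offset + 9:offset + 9 + length]
        if len(payload) < length:
            break  # incomplete frame: wait for more bytes
        frames.append((frame_type, flags, stream_id & 0x7FFF_FFFF, payload))
        offset += 9 + length
    return frames


# A DATA frame (type 0x0) whose payload itself contains "\r\n\r\n": the length prefix
# tells the parser where the frame ends, so no byte sequence in the payload is special.
payload = b"line1\r\n\r\nline2"
raw = struct.pack(">BHBBI", 0, len(payload), 0x0, 0x1, 1) + payload
print(parse_http2_frames(raw))
```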

Benefits of the Binary Protocol

- Data parsing is faster and less error-prone.

- Network resources can be used more effectively.

- Network latency is reduced and throughput improves.

- Security problems that stem from parsing free-form text, such as ambiguous delimiters, are reduced.

- It provides the foundation for other HTTP/2 features such as compression, multiplexing, prioritization, flow control, and efficient TLS processing.

Server Push

HTTP/2 allows the server to send files the client will likely need, such as JavaScript, CSS, font, and image files, in response to a single request, even if the client did not explicitly request them.

This is useful when the server can predict which resources the client will need. When the server receives a request for an HTML document, it can push resources that the document links to, such as images and CSS files. This saves the round trips the client would otherwise spend parsing the HTML and then requesting each of those resources.
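A server that pushes resources announces each one with a PUSH_PROMISE frame on the stream of the original request, reserving a new even-numbered stream for it. The Python sketch below covers only the decision step: it finds the stylesheets and images linked from an HTML page and pairs each with a promised stream identifier. The class and function names, the choice of tags, and the starting identifier of 2 are assumptions for illustration; a real server would use its next unused even identifier and send actual frames.

```python
from html.parser import HTMLParser


class LinkedResourceFinder(HTMLParser):
    """Hypothetical helper: collect stylesheet and image URLs referenced by HTML."""

    def __init__(self):
        super().__init__()
        self.resources = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "link" and attrs.get("rel") == "stylesheet" and attrs.get("href"):
            self.resources.append(attrs["href"])
        elif tag == "img" and attrs.get("src"):
            self.resources.append(attrs["src"])


def plan_pushes(html: str, first_promised_id: int = 2):
    """Pair each linked resource with a server-initiated (even) promised stream ID."""
    finder = LinkedResourceFinder()
    finder.feed(html)
    return [(first_promised_id + 2 * i, path) for i, path in enumerate(finder.resources)]


page = '<link rel="stylesheet" href="/style.css"><img src="/logo.png"><img src="/hero.jpg">'
for promised_id, path in plan_pushes(page):
    print(f"PUSH_PROMISE promised_stream={promised_id} path={path}")
```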

Benefits of Server Push

- The client stores pushed resources in its cache.

- Cached resources can be reused across multiple pages.

- The server can send pushed resources together with requested information using multiplexing.

- The server can prioritize pushed resources.

- The client stays in control: it can reject individual pushed resources (by resetting their streams) or disable server push entirely.

- The client can limit the number of multiplexed push streams.
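Both client-side controls are expressed through a SETTINGS frame (type 0x4) sent on stream 0: SETTINGS_ENABLE_PUSH (0x2) set to 0 turns server push off, and SETTINGS_MAX_CONCURRENT_STREAMS (0x3) caps how many streams the server may have open at once, which for a server means pushed streams. A minimal Python sketch that builds such a frame follows; the function name and the value 10 are arbitrary choices for illustration.

```python
import struct

SETTINGS = 0x4                          # SETTINGS frame type
SETTINGS_ENABLE_PUSH = 0x2              # 0 disables server push
SETTINGS_MAX_CONCURRENT_STREAMS = 0x3   # cap on streams the peer may open


def settings_frame(settings: dict) -> bytes:
    """Build a SETTINGS frame; each setting is a 16-bit identifier plus a 32-bit value."""
    payload = b"".join(struct.pack(">HI", ident, value) for ident, value in settings.items())
    # 9-byte frame header: 24-bit length, type, flags (0), and stream 0 (the connection).
    header = struct.pack(">BHBBI", len(payload) >> 16, len(payload) & 0xFFFF, SETTINGS, 0, 0)
    return header + payload


frame = settings_frame({SETTINGS_ENABLE_PUSH: 0, SETTINGS_MAX_CONCURRENT_STREAMS: 10})
print(frame.hex())
```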

Stream Prioritization

Streams can be prioritized. In other words, the client can tell the server the order in which it prefers to receive responses.

For example, if a document uses one CSS file and two image files, receiving the CSS file after the images may delay or disrupt rendering. HTTP/2 addresses this by letting the client declare dependencies between streams and assign each stream a weight, so the server can send the most important resources first.
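The dependency-and-weight model can be sketched in Python. In this simplified version, loosely following the model in RFC 7540, a stream that can send is served before the streams that depend on it, and sibling streams split their parent's share in proportion to their weights (1 to 256). The stream numbers, weights, and function names are hypothetical, and priorities are only hints that a real server may apply differently.

```python
from fractions import Fraction


def has_active(node, children, active):
    """True if this stream or any stream depending on it still has data to send."""
    return node in active or any(has_active(c, children, active) for c in children.get(node, []))


def allocate(children, weights, active, node=0, share=Fraction(1)):
    """Split bandwidth down the dependency tree (stream 0 is the root)."""
    if node != 0 and node in active:
        return {node: share}  # a sendable parent is served before its dependents
    kids = [k for k in children.get(node, []) if has_active(k, children, active)]
    total = sum(weights[k] for k in kids)
    shares = {}
    for kid in kids:
        # Siblings share their parent's capacity in proportion to their weights.
        shares.update(allocate(children, weights, active, kid, share * weights[kid] / total))
    return shares


# Hypothetical page: the CSS (stream 3) is a dependency of two images (streams 5 and 7).
children = {0: [3], 3: [5, 7]}
weights = {3: 256, 5: 32, 7: 16}
print(allocate(children, weights, active={3, 5, 7}))  # CSS gets all the bandwidth first
print(allocate(children, weights, active={5, 7}))     # then the images split it 2:1
```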

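Every stream also has a unique identifier: streams opened by the client use odd numbers, and streams opened by the server (for server push) use even numbers. Once an identifier has been used on a connection, it cannot be reused.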
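The identifier rules can be captured in a few lines of Python. This hypothetical allocator hands out increasing odd identifiers for a client and even ones for a server, never reuses one, and refuses to go past the 31-bit maximum; stream 0 is skipped because it is reserved for connection-level frames such as SETTINGS.

```python
class StreamIdAllocator:
    """Hypothetical helper: hand out stream IDs for one endpoint of a connection."""

    MAX_STREAM_ID = 2**31 - 1  # identifiers are 31-bit unsigned integers

    def __init__(self, is_client: bool):
        # Clients use odd IDs, servers even ones; stream 0 is the connection itself.
        self._next = 1 if is_client else 2

    def next_id(self) -> int:
        if self._next > self.MAX_STREAM_ID:
            # IDs are never reused, so an exhausted connection must be replaced.
            raise RuntimeError("stream identifiers exhausted; open a new connection")
        stream_id = self._next
        self._next += 2  # always increasing, never reused
        return stream_id


client, server = StreamIdAllocator(is_client=True), StreamIdAllocator(is_client=False)
print([client.next_id() for _ in range(3)], [server.next_id() for _ in range(2)])
```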
