MCP (Model Context Protocol)

MCP Overview

Model Context Protocol (MCP) is an open standard that lets AI applications, especially those built on large language models (LLMs), interact with external data sources and tools.

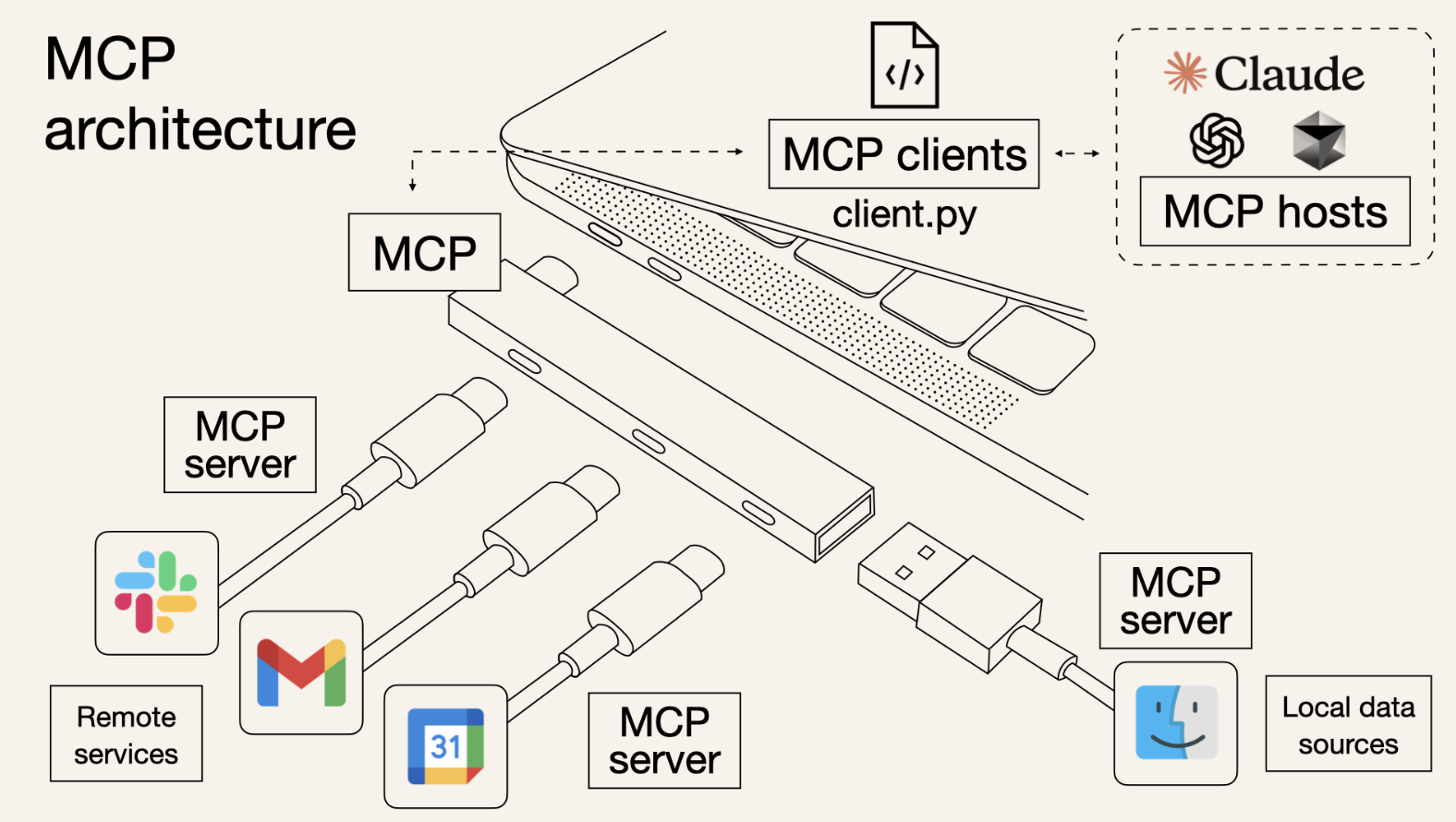

The protocol is designed so that applications can deliver context to LLMs in a consistent way. It is often described as a USB-C port for AI: just as USB-C connects many devices through one unified format, MCP connects AI models to many resources in a standardized way.

In other words, it is a common interface that allows AI models to connect with various external systems.

Main Features

- Standardized interface
  - Provides a common protocol through which models access data sources, tools, and applications.

- Pluggable structure
  - It is not tied to a specific application. Any model that supports MCP can be extended in the same way.

- Security and control
  - Designed to limit the scope a model can access and to allow access only to resources approved by the user.

- Developer friendly
  - Works across AI models from multiple vendors, such as OpenAI and Anthropic, so “an MCP tool built once can be used anywhere.”

Examples

- When a model needs a “database query”:
  Model -> MCP -> DB Adapter -> Database

- When a model needs to “call a web API”:
  Model -> MCP -> HTTP Adapter -> External REST API

In other words, MCP can be viewed as a foundational technology that standardizes the plugin ecosystem for AI.
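The two flows above share one shape: a request names a tool, and a common layer forwards it to the matching adapter. The following is a minimal sketch of that routing idea only, not of the protocol itself. The adapter functions, the tool names `database_query` and `http_request`, and the `route` helper are all hypothetical, and the adapters merely echo their input instead of contacting a real database or API.

```python
from typing import Callable, Dict


def db_adapter(params: dict) -> dict:
    # Hypothetical stand-in: a real adapter would run params["sql"] on a database.
    return {"source": "database", "echo": params["sql"]}


def http_adapter(params: dict) -> dict:
    # Hypothetical stand-in: a real adapter would send a request to params["url"].
    return {"source": "rest_api", "echo": params["url"]}


# One shared table maps tool names to adapters, so callers never
# need to know which backend sits behind a tool.
ADAPTERS: Dict[str, Callable[[dict], dict]] = {
    "database_query": db_adapter,
    "http_request": http_adapter,
}


def route(tool_name: str, params: dict) -> dict:
    """Forward a request to the adapter registered for tool_name."""
    if tool_name not in ADAPTERS:
        raise ValueError(f"unknown tool: {tool_name}")
    return ADAPTERS[tool_name](params)
```

Adding a new backend in this sketch means registering one more entry in `ADAPTERS`; the calling side stays unchanged.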

Background and Need

LLMs fundamentally take text as input and produce text as output. Real-world use, however, requires tasks such as database lookups, API calls, and file input/output. Before MCP, each of these needed its own solution, such as vendor-specific plugins, LangChain integrations, or custom API bridges. MCP replaces these one-off approaches with a single standardized protocol.

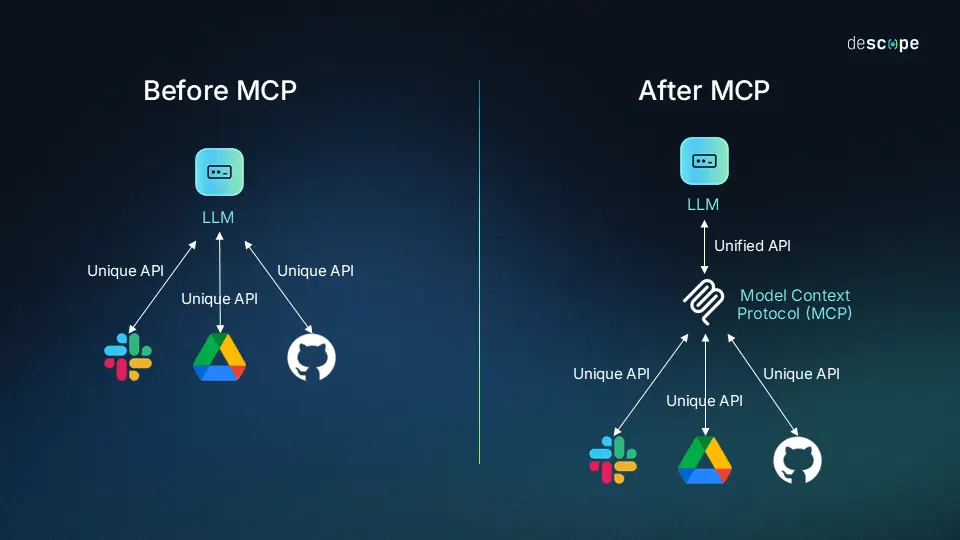

Previously, for AI applications to interact with external systems, a custom integration was needed for each pairing of model and tool. With M applications and N tools, that can mean up to M x N separate integrations, which greatly increases development and maintenance complexity. This is known as the M x N problem. With MCP, each application implements the client side once and each tool implements the server side once, so the total drops to M + N implementations.

- Image source: https://www.descope.com/learn/post/mcp
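The difference between M x N and M + N is easy to check with concrete numbers. The sketch below is plain arithmetic; the function names and the example counts (4 applications, 10 tools) are illustrative assumptions, not figures from any survey.

```python
def custom_integrations(num_apps: int, num_tools: int) -> int:
    """Every application needs its own integration with every tool."""
    return num_apps * num_tools


def mcp_implementations(num_apps: int, num_tools: int) -> int:
    """Each application implements a client once; each tool implements a server once."""
    return num_apps + num_tools


print(custom_integrations(4, 10))  # 40
print(mcp_implementations(4, 10))  # 14
```

The gap widens as either side grows: adding an eleventh tool costs four more integrations in the first model but only one more implementation in the second.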

Architecture and How It Works

Client-Server Structure

MCP adopts a client-server architecture.

- An MCP client runs inside an AI application (the host), such as Claude Desktop, and maintains the connection to a server.

- An MCP server provides external resources such as file systems, databases, and APIs.

Communication Method

MCP exchanges requests and responses as JSON-RPC 2.0 messages, and this shared message format improves interoperability. Two kinds of transport are supported: local inter-process communication over stdio, and HTTP-based communication using SSE (Server-Sent Events). Later revisions of the specification replace the HTTP + SSE transport with Streamable HTTP.
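To make the message format concrete, here is a minimal sketch that builds a JSON-RPC 2.0 request and its matching response using only the Python standard library. The helper names (`make_request`, `make_response`, `encode_line`) and the tool name `query_db` are hypothetical. The `tools/call` method name and the `content` result shape follow the MCP specification as understood here, and newline-delimited framing is how the stdio transport separates messages.

```python
import json
from typing import Optional


def make_request(request_id: int, method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return message


def make_response(request: dict, result: dict) -> dict:
    """Build a success response that echoes the request id."""
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


def encode_line(message: dict) -> str:
    """Serialize one message as a single newline-terminated line."""
    return json.dumps(message) + "\n"


# Hypothetical tool call: "query_db" and its arguments are made up.
request = make_request(1, "tools/call", {"name": "query_db", "arguments": {"sql": "SELECT 1"}})
response = make_response(request, {"content": [{"type": "text", "text": "1"}]})
```

The `id` field is what lets a client pair each response with the request that caused it, which matters once several requests are in flight on the same connection.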

Role of the Server

An MCP server performs the following functions.

- Tool Registry: Manages the list of available tools and functions

- Authentication: Verifies access permissions

- Request Handler: Handles client requests

- Response Formatter: Processes results into a format the AI model can understand

An AI application can request the server’s “list of available tools” and then select and use an appropriate tool based on that list.
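The four server roles listed above, and the list-then-call flow, can be sketched as a toy in-memory class. This is an illustration under stated assumptions, not the official SDK: `ToyToolServer`, its `approved_tools` allowlist, and the error code `-32001` are hypothetical choices, and real tool listings also carry an input schema that is omitted here. The codes `-32601` and `-32602` are the standard JSON-RPC values for "method not found" and "invalid params".

```python
from typing import Any, Callable, Dict, Set


class ToyToolServer:
    """Toy in-memory stand-in for an MCP server (hypothetical, not the official SDK)."""

    def __init__(self, approved_tools: Set[str]) -> None:
        self._tools: Dict[str, Callable[..., Any]] = {}
        self._descriptions: Dict[str, str] = {}
        self._approved = approved_tools  # tools the user has approved

    def register(self, name: str, description: str, func: Callable[..., Any]) -> None:
        # Tool registry: remember the callable and its description.
        self._tools[name] = func
        self._descriptions[name] = description

    def handle(self, request: dict) -> dict:
        # Request handler: dispatch on the JSON-RPC method name.
        method = request.get("method")
        if method == "tools/list":
            tools = [{"name": n, "description": d} for n, d in self._descriptions.items()]
            return self._result(request, {"tools": tools})
        if method == "tools/call":
            params = request.get("params", {})
            name = params.get("name")
            if name not in self._tools:
                return self._error(request, -32602, f"unknown tool: {name}")
            # Permission check against the hypothetical allowlist.
            if name not in self._approved:
                return self._error(request, -32001, f"tool not approved: {name}")
            value = self._tools[name](**params.get("arguments", {}))
            # Response formatter: wrap the value as text content.
            return self._result(request, {"content": [{"type": "text", "text": str(value)}]})
        return self._error(request, -32601, f"method not found: {method}")

    @staticmethod
    def _result(request: dict, result: dict) -> dict:
        return {"jsonrpc": "2.0", "id": request.get("id"), "result": result}

    @staticmethod
    def _error(request: dict, code: int, message: str) -> dict:
        return {"jsonrpc": "2.0", "id": request.get("id"), "error": {"code": code, "message": message}}
```

A client would first send `tools/list`, pick a tool from the returned names, and then send `tools/call` with that name and its arguments. Keeping the permission check inside `handle` means an unapproved tool is refused even if a client guesses its name.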

Developer Friendliness and Extensibility

Anthropic released MCP as an open source standard, and official SDKs are available for major languages such as Python, TypeScript, Java, Kotlin, and C#. These SDKs keep client and server implementations relatively simple.

Effects and Benefits

- Clear instructions: It is possible to clearly specify what data the LLM should handle

- Removal of ambiguity: Multiple information sources can be clearly distinguished and referenced

- Support for specialized processing: Dedicated processing for specific data formats is possible

- Combined contexts: File systems, databases, and cloud services can be used together as context

Thanks to these benefits, AI can expand its range of activity and provide more accurate, context-aware responses.

Adoption Timing and Ecosystem Trends

- MCP was released as open source by Anthropic in November 2024, and adoption by developer communities and major AI tools increased rapidly from early 2025.

- An official C# SDK has also been released. Many MCP servers are now in operation, and security architecture and data protection have become important concerns.

Summary Table

| Item | Description |
|---|---|
| Definition | An open standard protocol that connects AI models with external data and tools |
| Background | Resolves the complexity of individual integrations and secures more flexible extensibility |
| Structure | Client-server structure, JSON-RPC, standardized message exchange |
| SDK | Provides Python, TS, Java, Kotlin, C#, and more |
| Benefits | Greater clarity, extensibility, automation, and security |
| Current trend | Growing rapidly since release, with expanding security and practical use |
